Problem

The modern news ecosystem is fractured. Readers either consume news from a single outlet, absorbing that outlet's biases without noticing, or face the exhausting task of manually cross-referencing sources. Bias isn't disclosed; it's baked in.

- Invisible bias: Most news platforms don't tell users that a story is being covered exclusively by left-leaning or right-leaning outlets, creating a distorted picture of consensus.

- Blindspot blindness: If 90% of outlets ignore a story, readers simply never see it — not because it isn't real, but because it doesn't fit the dominant narrative.

- No AI-assisted fact checking: Distinguishing claims from facts requires effort most readers can't sustain; there's no accessible, low-friction way to verify what's real.

- Conflict coverage opacity: Ongoing conflicts (Gaza, Ukraine, etc.) are reported with wildly inconsistent casualty counts, event descriptions, and framing — with no tool to surface the discrepancies.

Research & Discovery

- Competitive analysis: Ground News is the closest comparison — Asha News was explicitly designed as "Ground News, enhanced with AI analysis." Key gaps identified: no AI verification layer, no developer/agent API, no conflict analytics module.

- Media bias landscape: Reviewed AllSides, Ad Fontes Media, and Media Bias/Fact Check rating methodologies to understand how bias is currently categorized and visualized.

- AI provider landscape: Evaluated Groq (speed), OpenRouter (model diversity), Perplexity (web-grounded factual verification), and OpenAI (general analysis) — designed the backend to support configurable provider selection.

- Target audience: Civically engaged readers who suspect bias but lack tools; journalists and researchers needing structured multi-source comparison; AI agents needing structured news digests via API.

Key insight: The AI layer isn't just a feature — it's the differentiator. Bias visualization without AI verification is Ground News. Add AI claim-checking and structured conflict analytics, and the product enters a different category.

Solution & Approach

Asha News clusters articles by story, visualizes the political lean and credibility of sources covering each cluster, and adds an AI layer for claim verification, Q&A, and conflict analytics.

Four primary surfaces:

- Home Feed — Clustered story digest with bias indicators per cluster

- AI Checker — Claim and article verification with configurable AI providers

- Markets — Financial instrument news with ticker integration

- Conflict Monitor (Beta) — Event-level analytics for ongoing conflicts: casualty counts, systems used, official announcements — across sources

Key architectural decisions:

- Multi-provider AI backend: Provider abstraction layer supports Groq, OpenRouter, Perplexity, and OpenAI — switchable via config. Avoids lock-in and enables cost/quality tradeoffs.

- Content-hash caching: Cached AI responses keyed on article cluster content hashes — avoids redundant API calls for unchanged stories, improving performance and reducing cost.

- Versioned API (/api/v1): Agent-friendly endpoints with an OpenAPI spec (GET /api/v1/openapi), structured digest payloads, and share tokens, designed for AI agent consumption from day one.

- Strict auth mode: STRICT_AUTH=true by default; legacy Directus routes disabled by default (LEGACY_DIRECTUS_ROUTES_ENABLED=false), giving a clean v1 surface from the start.

- WCAG 2.1 AA: Accessibility compliance baked in, not bolted on.
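The two flags above can be read with secure defaults at startup. The sketch below is a minimal illustration, assuming the backend reads plain environment variables; only the variable names and their default values come from this write-up, while the helper names `parseBool` and `loadAuthRouteConfig` and the accepted spellings ("true"/"1"/"yes") are hypothetical.

```typescript
// Hypothetical loader: only STRICT_AUTH, LEGACY_DIRECTUS_ROUTES_ENABLED and their
// defaults come from the write-up; everything else is illustrative.
export interface AuthRouteConfig {
  strictAuth: boolean;
  legacyDirectusRoutesEnabled: boolean;
}

type Env = Record<string, string | undefined>;

function parseBool(name: string, value: string | undefined, fallback: boolean): boolean {
  if (value === undefined || value.trim() === "") return fallback;
  const v = value.trim().toLowerCase();
  if (v === "true" || v === "1" || v === "yes") return true;
  if (v === "false" || v === "0" || v === "no") return false;
  // Fail fast: a typo such as "flase" must not silently change auth behavior
  throw new Error(`${name} has invalid boolean value "${value}"`);
}

export function loadAuthRouteConfig(env: Env): AuthRouteConfig {
  return {
    // Secure defaults: strict auth on, legacy Directus routes off
    strictAuth: parseBool("STRICT_AUTH", env.STRICT_AUTH, true),
    legacyDirectusRoutesEnabled: parseBool(
      "LEGACY_DIRECTUS_ROUTES_ENABLED",
      env.LEGACY_DIRECTUS_ROUTES_ENABLED,
      false,
    ),
  };
}
```

In a Node backend this would typically be called once, e.g. `loadAuthRouteConfig(process.env)`, with the result deciding whether legacy routes are mounted at all. Throwing on unrecognized values, rather than falling back, keeps a misspelled override from quietly weakening auth.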

Features & Functionality

Core Features:

- Home Feed — Personal/public feed with story clusters, bias indicators, and unified filters

- AI Checker — Claim verification + article fact-check workflows with multi-provider AI

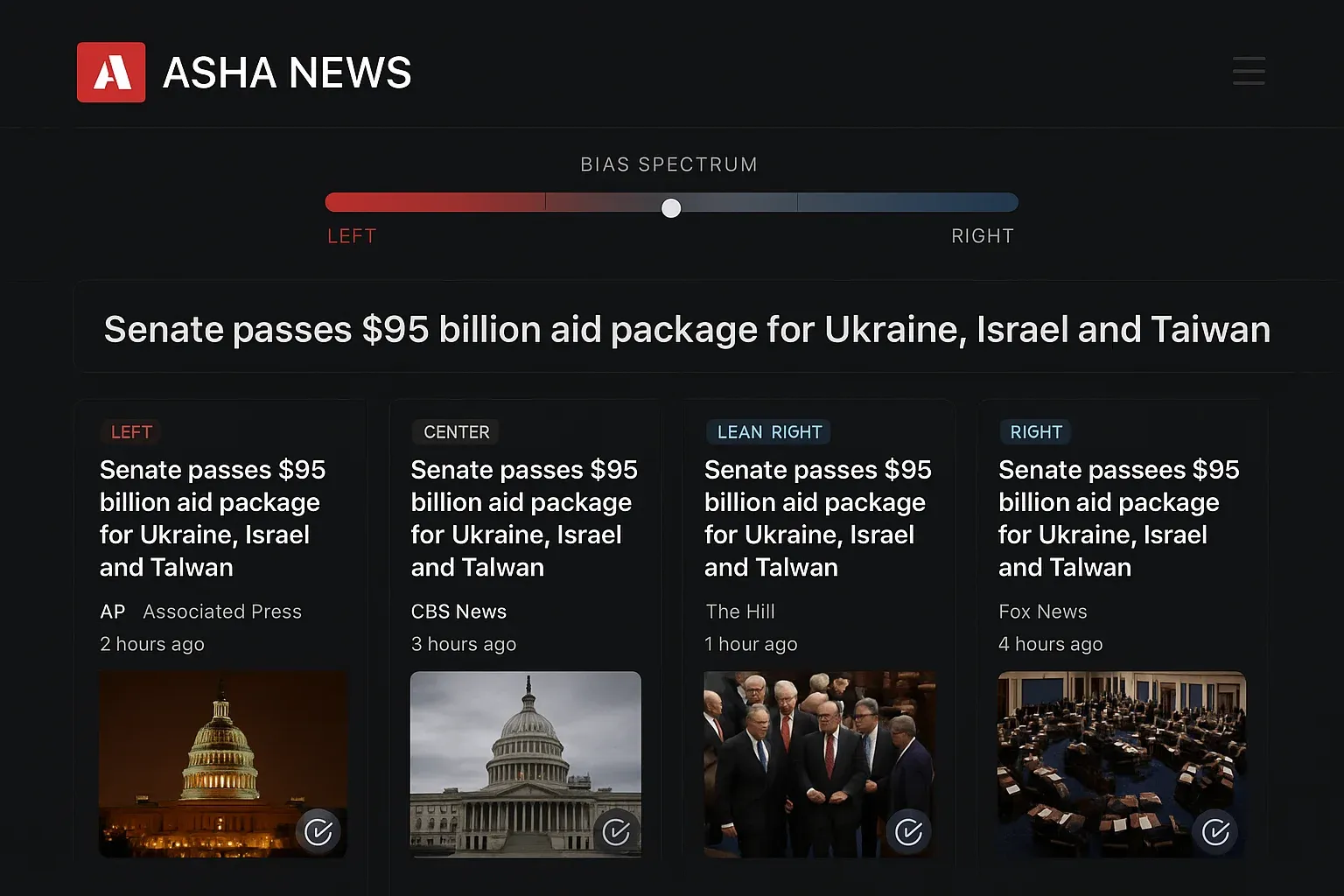

- Bias Visualizations — BiasBar (horizontal distribution), BiasIndicator (compact circular), CoverageChart (donut), CredibilityMeter (star ratings)

- Story clustering — Similarity-threshold-based clustering with admin-configurable parameters

- Markets module — Instrument ticker, price charts, related instrument news

- Conflict Monitor (Beta) — Event-level counts, casualty comparisons, released IDs, systems used, official announcements

- In-app Wiki: /wiki/conflict-ops, /wiki/ai-checker, /wiki/markets, /wiki/agent-api

- Admin panel: Fine-grained clustering controls, story presentation toggles, autonomy/source candidate management

- Light/dark mode — User preference persisted

- Mobile-first responsive — 4-breakpoint layout (mobile 320-767px → wide 1440px+)
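Similarity-threshold clustering can be sketched as a single greedy pass: each article joins the most similar existing cluster if its cosine similarity to that cluster's centroid reaches the threshold, and otherwise starts a new cluster. This is an illustrative technique, not the project's confirmed algorithm. It assumes articles have already been turned into numeric vectors (for example embeddings), and `ClusterParams.similarityThreshold` is a hypothetical stand-in for whatever admin-configurable parameters the real system exposes.

```typescript
// Illustrative greedy threshold clustering; not the project's confirmed algorithm.
export interface ArticleVector {
  id: string;
  vector: number[]; // assumed: a precomputed embedding or TF-IDF vector
}

export interface ClusterParams {
  similarityThreshold: number; // hypothetical admin-configurable parameter, 0 to 1
}

function cosine(a: number[], b: number[]): number {
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  return normA === 0 || normB === 0 ? 0 : dot / Math.sqrt(normA * normB);
}

interface Cluster {
  ids: string[];
  centroid: number[];
}

export function clusterArticles(articles: ArticleVector[], params: ClusterParams): string[][] {
  const clusters: Cluster[] = [];
  for (const article of articles) {
    // Find the existing cluster whose centroid is most similar to this article
    let best: Cluster | undefined;
    let bestScore = -Infinity;
    for (const cluster of clusters) {
      const score = cosine(article.vector, cluster.centroid);
      if (score > bestScore) {
        bestScore = score;
        best = cluster;
      }
    }
    if (best !== undefined && bestScore >= params.similarityThreshold) {
      const n = best.ids.length;
      // Keep the centroid as the running mean of its members' vectors
      best.centroid = best.centroid.map((v, i) => (v * n + article.vector[i]) / (n + 1));
      best.ids.push(article.id);
    } else {
      clusters.push({ ids: [article.id], centroid: [...article.vector] });
    }
  }
  return clusters.map((c) => c.ids);
}
```

A single greedy pass is cheap but order-dependent. Raising the threshold yields tighter, more numerous clusters, which is the kind of trade-off an admin slider would expose.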

Hero Features:

AI Checker: Users paste a claim or article URL. The backend routes the verification request through the configured AI provider (Groq for speed, Perplexity for web-grounded results), returns a structured verdict with supporting sources, and caches the result by content hash. Q&A, fact-check, and analysis modes are all supported.
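The first step, deciding whether the pasted text is an article URL or a free-text claim, might look like the following. This is a hypothetical sketch: `CheckerInput` and `classifyCheckerInput` are illustrative names, and the rule (a single whitespace-free token that parses as an http or https URL counts as an article) is an assumption.

```typescript
// Hypothetical input model for the AI Checker: either an article URL or a free-text claim.
export type CheckerInput =
  | { kind: "url"; url: URL }
  | { kind: "claim"; text: string };

export function classifyCheckerInput(raw: string): CheckerInput {
  const text = raw.trim();
  if (text === "") throw new Error("Nothing to check");

  // Only a single whitespace-free token can be a URL; sentences are always claims
  if (!/\s/.test(text)) {
    try {
      const url = new URL(text);
      if (url.protocol === "http:" || url.protocol === "https:") return { kind: "url", url };
    } catch {
      // Not parseable as a URL: fall through and treat it as a claim
    }
  }
  return { kind: "claim", text };
}
```

Under this rule a bare domain such as `example.com/story` is treated as a claim, because it has no scheme; a real implementation might try prefixing `https://` before giving up.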

Bias Visualization System: Every story cluster displays a visual breakdown of left/center/right source distribution. The color system: Red (left), Green (center), Blue (right), Purple (mixed). Each source also carries a credibility rating drawn from integrated bias databases. Together, these give readers immediate awareness of coverage skew without requiring them to know every outlet.
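One way to derive the numbers behind BiasBar and BiasIndicator is sketched below, assuming each source is tagged left, center, or right and that "mixed" is a cluster-level label used when no single lean dominates. That reading of purple, the 0.6 dominance threshold, and the names `summarizeBias` and `SourceRef` are assumptions; only the color mapping comes from this write-up.

```typescript
// Color mapping from the write-up; treating "mixed" as a cluster-level label is an assumption.
export type Lean = "left" | "center" | "right";
export type ClusterTone = Lean | "mixed";

export const BIAS_COLORS: Record<ClusterTone, string> = {
  left: "red",
  center: "green",
  right: "blue",
  mixed: "purple",
};

export interface SourceRef {
  outlet: string;
  lean: Lean;
}

export interface BiasSummary {
  shares: Record<Lean, number>; // fraction of distinct outlets per lean, 0 to 1
  tone: ClusterTone;
  color: string;
}

export function summarizeBias(sources: SourceRef[], dominanceThreshold = 0.6): BiasSummary {
  // Count each outlet once, even if it published several articles in the cluster
  const leanByOutlet = new Map<string, Lean>();
  for (const s of sources) leanByOutlet.set(s.outlet, s.lean);

  const counts: Record<Lean, number> = { left: 0, center: 0, right: 0 };
  leanByOutlet.forEach((lean) => {
    counts[lean] += 1;
  });

  const total = leanByOutlet.size;
  const share = (n: number): number => (total === 0 ? 0 : n / total);
  const shares: Record<Lean, number> = {
    left: share(counts.left),
    center: share(counts.center),
    right: share(counts.right),
  };

  // With a threshold above 0.5, at most one lean can dominate; otherwise the cluster reads as mixed
  const leans: Lean[] = ["left", "center", "right"];
  const tone: ClusterTone = leans.find((l) => shares[l] >= dominanceThreshold) ?? "mixed";
  return { shares, tone, color: BIAS_COLORS[tone] };
}
```

Counting distinct outlets rather than articles keeps one prolific outlet from making a story look more one-sided than its coverage actually is.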

Conflict Monitor: A structured analytics module purpose-built for ongoing conflicts. Tracks event-level data points across sources: reported casualty numbers, systems/weapons referenced, official announcements. Surfaces discrepancies across sources in a normalized comparison view. Admin-gated ingestion pipeline with human review queue for source candidates.
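The discrepancy view can be illustrated with a small aggregation: group reported casualty figures by event, keep the latest figure per source, and flag events whose figures diverge by more than a tolerance. The record shape, the relative-spread metric, and the 10% default tolerance are hypothetical choices for illustration, not the module's documented logic.

```typescript
// Hypothetical record shape for one source's casualty figure for one event.
export interface CasualtyReport {
  eventId: string;
  source: string;
  count: number;
}

export interface EventComparison {
  eventId: string;
  sources: number;
  min: number;
  max: number;
  spreadRatio: number; // (max - min) / max, 0 when all figures agree
  discrepant: boolean;
}

export function compareCasualtyReports(reports: CasualtyReport[], tolerance = 0.1): EventComparison[] {
  // eventId -> (source -> latest figure); later reports from a source replace earlier ones
  const byEvent = new Map<string, Map<string, number>>();
  for (const r of reports) {
    const perSource = byEvent.get(r.eventId) ?? new Map<string, number>();
    perSource.set(r.source, r.count);
    byEvent.set(r.eventId, perSource);
  }

  const result: EventComparison[] = [];
  byEvent.forEach((perSource, eventId) => {
    const counts = Array.from(perSource.values());
    const min = Math.min(...counts);
    const max = Math.max(...counts);
    const spreadRatio = max === 0 ? 0 : (max - min) / max;
    result.push({ eventId, sources: counts.length, min, max, spreadRatio, discrepant: spreadRatio > tolerance });
  });
  return result;
}
```

A relative metric puts a 10-versus-12 disagreement and a 1,000-versus-1,200 disagreement on the same scale; the tolerance is the kind of value an admin would tune.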

Agent API (/api/v1): Structured REST endpoints with OpenAPI spec, digest payloads, share tokens, and cluster search — designed to be consumed by autonomous AI agents. A developer or agent can pull a structured news digest without scraping or parsing HTML.
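The write-up does not specify how share tokens are built. As a hedged illustration only, a stateless token could be an HMAC-signed, expiring payload. The `SharePayload` shape, the `body.signature` format, and the function names below are assumptions; a real deployment might instead store tokens in the database so they can be revoked.

```typescript
import { createHmac, timingSafeEqual } from "node:crypto";

// Hypothetical token payload: which cluster is shared and when the link expires (Unix seconds).
export interface SharePayload {
  clusterId: string;
  exp: number;
}

function sign(body: string, secret: string): string {
  return createHmac("sha256", secret).update(body).digest("base64url");
}

// Assumed format: base64url(JSON payload) + "." + base64url(HMAC-SHA256 signature)
export function createShareToken(payload: SharePayload, secret: string): string {
  const body = Buffer.from(JSON.stringify(payload), "utf8").toString("base64url");
  return `${body}.${sign(body, secret)}`;
}

export function verifyShareToken(
  token: string,
  secret: string,
  nowSeconds: number = Math.floor(Date.now() / 1000),
): SharePayload | null {
  const parts = token.split(".");
  if (parts.length !== 2) return null;
  const [body, signature] = parts;
  const expected = Buffer.from(sign(body, secret));
  const given = Buffer.from(signature);
  // timingSafeEqual throws on unequal lengths, so check length first
  if (expected.length !== given.length || !timingSafeEqual(expected, given)) return null;
  const payload = JSON.parse(Buffer.from(body, "base64url").toString("utf8")) as SharePayload;
  return payload.exp > nowSeconds ? payload : null;
}
```

The constant-time comparison avoids leaking how many signature bytes matched; the expiry check runs only after the signature is verified, so a forged payload cannot extend its own lifetime.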

Design & UX

Design philosophy:

- Clarity over density — bias data must be instantly readable, not buried in dropdowns

- Dark and light modes both feel intentional (not an afterthought)

- Accessible first: WCAG 2.1 AA compliance, keyboard navigation throughout

Color system:

- Light: Clean whites + slate grays

- Dark: Deep slate backgrounds + high contrast text

- Bias palette: Red (left), Green (center), Blue (right), Purple (mixed) — consistent across all visualization components

Typography:

- Inter with system fallbacks

- Headlines scale from 24px (mobile) to 36px (desktop)

- Line heights optimized for readability (1.2–1.6)

Key UI decisions:

- Bias indicators appear at both cluster level (overview) and source level (detail) — users can scan or drill in

- Conflict Monitor uses a structured table/comparison layout, not prose — data is comparable at a glance

- Admin clustering controls use sliders and toggles with live preview semantics — no cryptic config files

User Flow — AI Claim Verification:

```mermaid
flowchart TD
    A["User enters claim or article URL\nin AI Checker"] --> B["Backend checks content hash\nagainst cache"]
    B --> C{Cache hit?}
    C -->|Yes| D["Return cached verdict\n(0 API cost)"]
    C -->|No| E["Route to AI provider\n(Groq / Perplexity / OpenRouter / OpenAI)"]
    E --> F["AI returns structured verdict:\n- Rating (True/False/Mixed/Unverified)\n- Supporting sources\n- Confidence score"]
    F --> G["Cache result by content hash"]
    G --> H["Display to user with\nsource citations"]
    D --> H
```
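Step F returns a structured verdict with a rating, supporting sources, and a confidence score. Because model output is not guaranteed to match a schema, it is worth validating before the result is cached or displayed. A minimal sketch, assuming the provider is asked to reply with JSON fields named `rating`, `sources`, and `confidence` (on a 0 to 1 scale); these field names, the scale, and the fallback-to-Unverified policy are assumptions.

```typescript
// Hypothetical verdict schema matching step F: rating, supporting sources, confidence.
export type Rating = "True" | "False" | "Mixed" | "Unverified";

export interface Verdict {
  rating: Rating;
  sources: string[];
  confidence: number; // assumed scale: 0 to 1
}

const RATINGS: readonly Rating[] = ["True", "False", "Mixed", "Unverified"];

function unverified(): Verdict {
  return { rating: "Unverified", sources: [], confidence: 0 };
}

export function parseVerdict(raw: string): Verdict {
  let data: unknown;
  try {
    data = JSON.parse(raw);
  } catch {
    return unverified(); // the reply was not JSON at all
  }
  if (typeof data !== "object" || data === null) return unverified();
  const obj = data as Record<string, unknown>;

  // Unknown ratings degrade to Unverified instead of being shown to users
  const rating = RATINGS.find((r) => r === obj.rating) ?? "Unverified";
  const sources = Array.isArray(obj.sources)
    ? obj.sources.filter((s): s is string => typeof s === "string")
    : [];
  const c = obj.confidence;
  // Clamp confidence into [0, 1]; missing or non-finite values become 0
  const confidence = typeof c === "number" && Number.isFinite(c) ? Math.min(1, Math.max(0, c)) : 0;
  return { rating, sources, confidence };
}
```

One design consequence to weigh: a reply that fails validation probably should not be written to the content-hash cache, or the Unverified fallback would keep being served for that content.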

Technical Architecture

Tech Stack:

| Layer | Technology |
|---|---|
| Frontend | React 18, TypeScript, Tailwind CSS, React Router |
| Backend | Node.js, Express, TypeScript |
| Database / Auth | Supabase (PostgreSQL + Auth) |
| AI Providers | Groq, OpenRouter, Perplexity, OpenAI (configurable) |
| Deployment | Netlify (frontend), VPS (backend/workers) |
| API spec | OpenAPI 3.0 (auto-served at /api/v1/openapi) |
| Testing | Content-hash cache test suite, smoke tests, security gate |
| CI/CD | GitHub Actions (release check: lint + test + build + security + smoke) |

Architecture Overview:

```mermaid
graph TB
    subgraph "Frontend (Netlify)"
        HOME["Home Feed"]
        CHECKER["AI Checker"]
        MARKETS["Markets"]
        CONFLICTS["Conflict Monitor"]
        ADMIN["Admin Panel"]
    end

    subgraph "Backend API (VPS)"
        V1["REST /api/v1\n(Agent-friendly)"]
        CA["Conflict Analytics\n/api/conflicts/*"]
        AUTH["Auth + RLS\n(Supabase)"]
        CACHE["Content-Hash Cache"]
    end

    subgraph "AI Layer"
        GROQ["Groq"]
        OR["OpenRouter"]
        PPX["Perplexity"]
        OAI["OpenAI"]
    end

    subgraph "Data"
        DB["Supabase/Postgres"]
        WORKERS["Background Workers\n(ingestion, clustering)"]
    end

    HOME --> V1
    CHECKER --> V1
    MARKETS --> V1
    CONFLICTS --> CA
    ADMIN --> V1
    V1 --> AUTH
    V1 --> CACHE
    CACHE -->|"cache miss"| GROQ
    CACHE -->|"cache miss"| OR
    CACHE -->|"cache miss"| PPX
    CACHE -->|"cache miss"| OAI
    V1 --> DB
    CA --> DB
    WORKERS --> DB
```

Notable technical challenges:

Multi-provider AI abstraction: Rather than hardcoding a single AI provider, the backend routes requests through a provider abstraction. This allows Groq to be used for latency-sensitive fact checks while Perplexity handles web-grounded verification. Provider selection is configured at the environment level.
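A provider abstraction of this kind can be sketched as a common interface plus a registry that resolves a provider per request mode from environment configuration. This is a minimal sketch under stated assumptions: the `AIProvider` interface, `ProviderRegistry`, the per-mode override, the `groq` default, and the environment variable names `AI_PROVIDER` and `AI_PROVIDER_FACT_CHECK` are all hypothetical.

```typescript
// Hypothetical provider abstraction; names mirror the four backends in the write-up.
export type ProviderName = "groq" | "openrouter" | "perplexity" | "openai";
export type Mode = "qa" | "fact-check" | "analysis";

export interface VerificationRequest {
  mode: Mode;
  text: string;
}

export interface AIProvider {
  readonly name: ProviderName;
  complete(req: VerificationRequest): Promise<string>;
}

export interface ProviderConfig {
  defaultProvider: ProviderName;
  perMode: Partial<Record<Mode, ProviderName>>;
}

const PROVIDER_NAMES: readonly ProviderName[] = ["groq", "openrouter", "perplexity", "openai"];

function parseProvider(value: string | undefined, fallback: ProviderName): ProviderName {
  const v = value?.trim().toLowerCase();
  return PROVIDER_NAMES.find((p) => p === v) ?? fallback;
}

// AI_PROVIDER and AI_PROVIDER_FACT_CHECK are hypothetical variable names.
export function providerConfigFromEnv(env: Record<string, string | undefined>): ProviderConfig {
  const defaultProvider = parseProvider(env.AI_PROVIDER, "groq");
  return {
    defaultProvider,
    perMode: { "fact-check": parseProvider(env.AI_PROVIDER_FACT_CHECK, defaultProvider) },
  };
}

export class ProviderRegistry {
  private readonly providers = new Map<ProviderName, AIProvider>();

  register(provider: AIProvider): this {
    this.providers.set(provider.name, provider);
    return this;
  }

  // Per-mode override first, then the default; fail loudly if the chosen provider was never registered
  resolve(mode: Mode, config: ProviderConfig): AIProvider {
    const name = config.perMode[mode] ?? config.defaultProvider;
    const provider = this.providers.get(name);
    if (!provider) throw new Error(`AI provider "${name}" is configured but not registered`);
    return provider;
  }
}
```

With this shape, moving fact checks from Perplexity to another backend is an environment change rather than a code change, which is the property the write-up credits the abstraction with.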

Content-hash caching: AI verification calls are expensive. Caching by content hash means that if a cluster's source articles haven't changed, the same verdict is returned instantly. The test suite specifically exercises Q&A, fact-check, and analysis endpoints to verify cache hits.
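A minimal sketch of the cache, assuming SHA-256 over a canonical form of the cluster's articles plus the request mode, with an in-memory `Map` standing in for whatever store the backend actually uses. Including the mode keeps Q&A, fact-check, and analysis results for the same cluster separate, and sorting the articles makes the key independent of feed order. `clusterContentHash`, `ContentHashCache`, and the `ClusterArticle` shape are hypothetical names.

```typescript
import { createHash } from "node:crypto";

// Hypothetical shapes; the real backend's article model is not described in the write-up.
export type Mode = "qa" | "fact-check" | "analysis";

export interface ClusterArticle {
  url: string;
  body: string;
}

// Stable key: the same articles in any order, with the same mode, hash identically.
export function clusterContentHash(articles: ClusterArticle[], mode: Mode): string {
  const parts = articles.map((a) => JSON.stringify([a.url, a.body])).sort();
  return createHash("sha256").update(JSON.stringify([mode, parts])).digest("hex");
}

export class ContentHashCache<T> {
  // Stores in-flight promises too, so concurrent misses for one key share a single call
  private readonly entries = new Map<string, Promise<T>>();

  async getOrCompute(key: string, compute: () => Promise<T>): Promise<T> {
    const cached = this.entries.get(key);
    if (cached) return cached;
    const pending = compute();
    this.entries.set(key, pending);
    try {
      return await pending;
    } catch (err) {
      // Do not cache failures; the next request retries the provider
      this.entries.delete(key);
      throw err;
    }
  }
}
```

Caching the in-flight promise rather than only the final value also collapses simultaneous requests for the same unchanged cluster into one provider call, while failed calls are evicted so they can be retried.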

Conflict Monitor ingestion pipeline: Conflict data requires human-in-the-loop governance. The system has a source candidate queue, human review endpoints (/api/conflicts/reviews/:eventId), and approval/rejection flows — preventing unvetted sources from contaminating the analytics.
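The candidate governance flow can be modeled as a small state machine in which candidates start pending, a named reviewer approves or rejects each one once, and ingestion reads only the approved allowlist. This is a hypothetical in-memory sketch: `SourceReviewQueue` and its methods are illustrative names, and the per-event review endpoint (/api/conflicts/reviews/:eventId) is not modeled here.

```typescript
// Hypothetical model of the source-candidate governance flow; names are illustrative.
export type ReviewStatus = "pending" | "approved" | "rejected";

export interface SourceCandidate {
  id: string;
  domain: string;
  status: ReviewStatus;
  reviewer?: string;
  note?: string;
}

export class SourceReviewQueue {
  private readonly candidates = new Map<string, SourceCandidate>();

  submit(id: string, domain: string): SourceCandidate {
    if (this.candidates.has(id)) throw new Error(`Candidate ${id} already submitted`);
    const candidate: SourceCandidate = { id, domain, status: "pending" };
    this.candidates.set(id, candidate);
    return candidate;
  }

  decide(id: string, decision: "approved" | "rejected", reviewer: string, note?: string): SourceCandidate {
    const candidate = this.candidates.get(id);
    if (!candidate) throw new Error(`Unknown candidate ${id}`);
    // Decisions are final here; re-reviewing would need an explicit reopen step
    if (candidate.status !== "pending") throw new Error(`Candidate ${id} is already ${candidate.status}`);
    candidate.status = decision;
    candidate.reviewer = reviewer;
    candidate.note = note;
    return candidate;
  }

  pending(): SourceCandidate[] {
    return Array.from(this.candidates.values()).filter((c) => c.status === "pending");
  }

  // Ingestion reads only this allowlist, so unvetted sources never reach the analytics
  approvedDomains(): Set<string> {
    const approved = Array.from(this.candidates.values()).filter((c) => c.status === "approved");
    return new Set(approved.map((c) => c.domain));
  }
}
```

Recording the reviewer and an optional note on every decision gives the audit trail that human-in-the-loop governance depends on.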

Process & Iteration

v1 surface policy decision: Early versions had more navigation surfaces. Deliberately trimmed to four primary surfaces (Home, AI Checker, Markets, Digest) to reduce cognitive load and enforce a clean v1 contract. Legacy Directus routes disabled by default.

Conflict Monitor evolution: Started as a simple event counter. Grew into a full ingestion pipeline with source candidate governance, human review queues, and autonomy controls — driven by the realization that conflict data quality requires more oversight than general news clustering.

API-first design: The /api/v1 surface was designed for agents from day one. This decision shaped the entire data model — structured payloads, versioned contracts, OpenAPI spec served dynamically.
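Because the spec is served at GET /api/v1/openapi, an agent can discover the v1 surface before calling it. The client sketch below assumes only that the endpoint returns a standard OpenAPI 3.0 JSON document. `discoverV1`, `listOperations`, and the injected `FetchLike` function are hypothetical; the fetch function is passed in so the code does not depend on a particular HTTP library.

```typescript
// Minimal injectable fetch signature so the sketch does not depend on a specific HTTP client.
export type FetchLike = (url: string) => Promise<{ ok: boolean; status: number; json(): Promise<unknown> }>;

export interface OperationSummary {
  method: string;
  path: string;
  operationId?: string;
}

const HTTP_METHODS = ["get", "put", "post", "delete", "patch", "head", "options", "trace"];

type PathItem = Record<string, { operationId?: string } | undefined>;

// Flatten an OpenAPI 3.0 "paths" object into (method, path) pairs, sorted by path.
export function listOperations(spec: unknown): OperationSummary[] {
  const paths: Record<string, PathItem> =
    typeof spec === "object" && spec !== null
      ? ((spec as { paths?: Record<string, PathItem> }).paths ?? {})
      : {};
  const ops: OperationSummary[] = [];
  for (const path of Object.keys(paths).sort()) {
    const item = paths[path];
    // Non-method keys such as "parameters" or "summary" are skipped
    for (const method of HTTP_METHODS) {
      const op = item[method];
      if (op) ops.push({ method: method.toUpperCase(), path, operationId: op.operationId });
    }
  }
  return ops;
}

export async function discoverV1(baseUrl: string, fetchImpl: FetchLike): Promise<OperationSummary[]> {
  // The spec location (GET /api/v1/openapi) is taken from the write-up
  const res = await fetchImpl(new URL("/api/v1/openapi", baseUrl).toString());
  if (!res.ok) throw new Error(`OpenAPI request failed with HTTP ${res.status}`);
  return listOperations(await res.json());
}
```

Since the spec is generated from the versioned contract, an agent that discovers operations this way does not need hard-coded endpoint lists that drift as the API evolves.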

Release discipline: A formal release checklist (RELEASE_CHECKLIST_V1.md) and rollback checklist (ROLLBACK_CHECKLIST_V1.md) were added after a deployment introduced a regression. CI release check gate: lint + tests + build + security + v1 smoke + authenticated smoke.
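The release gate's fail-fast ordering can be expressed as a small runner. This is a hypothetical sketch, not the project's actual GitHub Actions configuration: each step is an injected async check, and the step labels in `RELEASE_STEP_ORDER` mirror the list above rather than real script names.

```typescript
// Hypothetical fail-fast runner; step labels mirror the CI release check described above.
export interface GateStep {
  name: string;
  run: () => Promise<boolean>; // resolves true when the check passes
}

export interface GateResult {
  passed: boolean;
  completed: string[];
  failedAt?: string;
}

export const RELEASE_STEP_ORDER = ["lint", "test", "build", "security", "v1-smoke", "authenticated-smoke"] as const;

export async function runReleaseGate(steps: GateStep[]): Promise<GateResult> {
  const completed: string[] = [];
  for (const step of steps) {
    let ok = false;
    try {
      ok = await step.run();
    } catch {
      ok = false; // a crashing check counts as a failure, never a pass
    }
    // Stop at the first failure so later stages (e.g. smoke tests) never run against a broken build
    if (!ok) return { passed: false, completed, failedAt: step.name };
    completed.push(step.name);
  }
  return { passed: true, completed };
}
```

Reporting which step failed and which completed gives the rollback checklist a concrete starting point when a release is blocked.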

Outcomes & Impact

- Full platform: 4 primary surfaces, structured agent API, in-app wiki, admin panel

- AI provider flexibility: 4 supported AI backends — avoids lock-in, enables cost/quality control

- Conflict Monitor governance: Human review queue + autonomy controls — responsible AI-assisted conflict reporting

- Agent-ready API: OpenAPI spec auto-served; structured digest endpoints; share tokens for programmatic distribution

- (estimated) Bias awareness improvement: Readers who see the bias distribution for each story cluster gain coverage context that would otherwise require manually cross-referencing outlets

No public user metrics are available. Qualitative differentiation: Asha News combines bias visualization, AI claim verification, structured conflict analytics, and a developer-facing agent API in a single product; the competitive analysis did not find this combination in Ground News or the other platforms reviewed.

Reflections

What went well:

- The multi-provider AI abstraction was the right call — Groq and Perplexity serve different needs and having both available without code changes is valuable

- Content-hash caching made AI verification feel fast; without it, the feature would not have been acceptable

- The agent API discipline (versioned, OpenAPI-served, structured payloads) is a genuine competitive advantage

What I'd do differently:

- Start the Conflict Monitor governance framework earlier — retrofitting a review queue onto an existing ingestion pipeline was more complex than building it in

- The legacy Directus route cleanup should have happened at project start, not after launch

Skills demonstrated:

- Full-stack product engineering (React, Node.js, Supabase)

- AI provider integration and abstraction patterns

- Responsible AI system design (governance, human review queues, content hash verification)

- API-first product design

- Media bias domain expertise